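Today's recommended open-source project: "wheels" (something from nothing)

Today's recommended English article: "But, What Exactly Is AI?"

Today's recommended open-source project: "wheels" (something from nothing). Link: GitHub link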

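Why we recommend it: There are many ways to learn something thoroughly, and one of them is to build it yourself. This project is a set of wheels the author made by hand, written from scratch without relying on other dependencies. At work we inevitably reach for simple, ready-made wheels to assemble our own car, and that speeds things up considerably. But if you want to master the details, try reimplementing one using only the base language and nothing else. This is sometimes even harder than the original author's work, and it teaches you a lot that you can only learn through practice.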

Today's recommended English article: "But, What Exactly Is AI?" by Rich Folsom

Original link: https://towardsdatascience.com/but-what-exactly-is-ai-59454770d39b

Why we recommend it: what AI actually is, explained in simple terms.

But, What Exactly Is AI?

For years, people viewed computers as machines that could perform mathematical operations much faster than humans: computational machines, like glorified calculators. Early scientists felt that computers could never simulate the human brain. Then scientists, researchers, and (probably most importantly) science fiction authors started asking, “or could they?” The biggest obstacle to solving this problem came down to one major issue: the human mind can do things that scientists couldn’t understand, much less approximate. For example, how would we write algorithms for these tasks:

- A song comes on the radio, and most music listeners can quickly identify the genre, maybe the artist, and probably the song.

- An art critic sees a painting he’s never seen before, yet he could most likely identify the era, the medium, and probably the artist.

- A baby can recognize her mom’s face at a very early age.

The terms Artificial Intelligence (AI) and Machine Learning (ML) have been used since the 1950s. At that time, they were viewed as a futuristic, theoretical part of computer science. Now, thanks to increases in computing capacity and extensive research into algorithms, AI is a viable reality. So much so that many products we use every day have some variation of AI built into them (Siri, Alexa, Snapchat facial filters, background noise filtration for phones/headphones, etc.). But what do these terms mean?

Simply put, AI means to program a machine to behave like a human. In the beginning, researchers developed algorithms to try to approximate human intuition. A way to view this code would be as a huge if/else statement to determine the answer. For example, here’s some pseudocode for a chatbot from this era:

    if (input.contains('hello'))
        response = 'how are you'
    else if (input.contains('problem'))
        response = 'how can we help with your problem?'
    ...
    print(response)
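For readers who want to run the idea, here is a minimal Python sketch of the same rule-based approach. The function name `respond` and the fallback reply are assumptions added for illustration; the pseudocode above elides the remaining rules, and this sketch does not try to fill them in.

```python
def respond(user_input: str) -> str:
    """Pick a canned reply using hand-written keyword rules."""
    text = user_input.lower()
    if "hello" in text:
        return "how are you"
    elif "problem" in text:
        return "how can we help with your problem?"
    # Hypothetical fallback, not part of the original pseudocode.
    return "sorry, I did not understand that"


print(respond("Hello there"))
print(respond("I have a problem with my order"))
```

Every new kind of input needs another hand-written branch, which is why this style of program grows into the huge if/else statement described above.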

ML turned out to be a groundbreaking idea. So much so that nowadays, researchers and developers use the terms AI and ML almost interchangeably. You will frequently see it referred to as AI/ML, which is what I will use for the rest of the article. Be cautious, however, if you run into a Ph.D./Data Scientist type, as they will undoubtedly correct you.

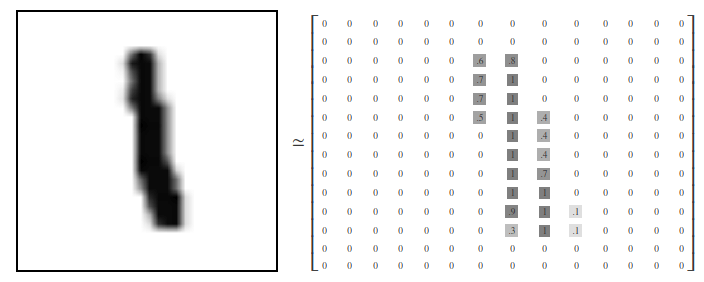

Before we go any further, we need to take into account one global truth for AI/ML: input data is always numeric. Machines can’t listen to music, read handwritten numbers, or watch videos. Instead, these have to be represented in a digital format. Take this handwritten number, for example:

https://colah.github.io/posts/2014-10-Visualizing-MNIST/

On the left is what the image looks like when represented as a picture. On the right is how it is actually viewed internally by the computer. The numbers range between zero (white) and one (black). The key takeaway here is that anything that can be represented numerically can be used for AI/ML, and pretty much anything can be represented numerically.
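As a rough illustration of that numeric view, the sketch below stores a tiny picture as a grid of values between zero (white) and one (black). The 5x5 grid and the helper names `flatten` and `render` are made up for this example; real handwritten-digit images are larger, but the idea is the same.

```python
# A hypothetical 5x5 grayscale "image" of the digit 1 (0.0 = white, 1.0 = black).
image = [
    [0.0, 0.0, 1.0, 0.0, 0.0],
    [0.0, 1.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0, 0.0],
    [0.0, 1.0, 1.0, 1.0, 0.0],
]


def flatten(grid):
    """Turn the 2D grid into one flat list of numbers, ready to feed a model."""
    return [pixel for row in grid for pixel in row]


def render(grid, threshold=0.5):
    """Draw the grid as text: '#' for dark pixels, '.' for light ones."""
    return "\n".join(
        "".join("#" if pixel >= threshold else "." for pixel in row)
        for row in grid
    )


features = flatten(image)
print(len(features))  # 25 numbers describe the whole picture
print(render(image))
```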

The process of AI/ML is to create a model, train it, test it, then infer results with new data. There are three main types of learning in AI/ML. They are Supervised Learning, Unsupervised Learning, and Reinforcement Learning. Let’s look at these in detail.
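Before looking at each type, here is a minimal sketch of the create, train, test, infer cycle described above. It uses a one-variable linear model fitted by gradient descent, which is a stand-in chosen for brevity and not something the article prescribes. The data, the function names, and the learning rate are all assumptions.

```python
# Hypothetical data that follows y = 2x + 1, as (input, known answer) pairs.
train_rows = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]
test_rows = [(4.0, 9.0), (5.0, 11.0)]


def predict(w, b, x):
    """The model: a straight line with slope w and intercept b."""
    return w * x + b


def mean_squared_error(w, b, rows):
    """Average squared gap between predictions and known answers."""
    return sum((predict(w, b, x) - y) ** 2 for x, y in rows) / len(rows)


def fit(rows, epochs=2000, learning_rate=0.05):
    w, b = 0.0, 0.0  # 1. create the model with untrained parameters
    for _ in range(epochs):  # 2. train: one parameter update per pass
        n = len(rows)
        grad_w = sum(2 * (predict(w, b, x) - y) * x for x, y in rows) / n
        grad_b = sum(2 * (predict(w, b, x) - y) for x, y in rows) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


w, b = fit(train_rows)
test_error = mean_squared_error(w, b, test_rows)  # 3. test on unseen rows
new_prediction = predict(w, b, 10.0)  # 4. infer a result for new data
print(round(w, 3), round(b, 3), round(new_prediction, 3))
```

Keeping the test rows out of training is the point of step 3: it checks whether the model generalizes or has only memorized what it was shown.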

Supervised Learning

In this case, we have input data and know what the correct “answer” is. A simple way to visualize the input data is a grid of rows and columns. At least one of the columns is the “label,” the value we’re trying to predict. The rest of the columns are the “features,” which are the values we use to make our prediction. The process is to keep feeding our model the features. For each row of input data, our model will take the features and generate a prediction. After each round (“epoch”), the model will compare its predictions to the label and determine the accuracy. It will then go back and update its parameters to try to generate a more accurate prediction for the next epoch. Within supervised learning, there are numerous types of models and applications (predictive analytics, image recognition, speech recognition, time series forecasting, etc.).

Unsupervised Learning

In this case, we don’t have any labels, so the best thing we can do is to try to find similar objects and group them into clusters. Hence, Unsupervised Learning is frequently referred to as “clustering.” At first, this may not seem very useful, but it turns out to be helpful in areas such as:

- Customer Segmentation: what types of customers are buying our products, and how can we customize our marketing to each segment?

- Fraud Detection: assuming most credit card transactions follow a similar pattern, we can identify transactions that don’t follow that pattern and investigate those for fraud.

- Medical Diagnosis: different patients may fall into different clusters based on disease history, lifestyle, medical readings, etc. If a patient falls out of these clusters, we could investigate further for potential health issues.
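To make the customer segmentation case concrete, the sketch below groups a handful of made-up customers with k-means, one common clustering algorithm. The article does not name a specific algorithm, so k-means is an assumption here, as are the data, the starting centroids, and the function names.

```python
import math


def closest(point, centroids):
    """Index of the centroid nearest to the point (Euclidean distance)."""
    distances = [math.dist(point, c) for c in centroids]
    return distances.index(min(distances))


def mean(points):
    """Coordinate-wise average of a non-empty list of points."""
    return tuple(sum(axis) / len(points) for axis in zip(*points))


def k_means(points, centroids, iterations=10):
    """Alternate between assigning points to centroids and moving centroids."""
    for _ in range(iterations):
        groups = [[] for _ in centroids]
        for p in points:
            groups[closest(p, centroids)].append(p)
        # Keep the old centroid if a group ends up empty.
        centroids = [mean(g) if g else c for g, c in zip(groups, centroids)]
    labels = [closest(p, centroids) for p in points]
    return centroids, labels


# Hypothetical customers: (visits per month, average spend). No labels given.
customers = [(1, 10), (2, 12), (1, 14), (9, 80), (10, 85), (11, 78)]
centroids, labels = k_means(customers, centroids=[customers[0], customers[3]])
print(labels)
print(centroids)
```

The algorithm is never told which customers belong together. The two groups emerge from the distances alone, and a point far from every centroid would be a natural candidate for the kind of follow-up investigation described in the fraud and medical examples.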

Reinforcement Learning

Here, there’s no “right” answer. What we’re trying to do is train a model so that it can react in a way that will produce the best result in the end. Reinforcement Learning is frequently used in video games. For example, we might want to train a computerized Pong opponent. The opponent will learn by continuing to play Pong, getting positive reinforcement for things like scoring and winning games, and negative reinforcement for things like giving up points and losing games. A much more meaningful use of Reinforcement Learning is in the area of autonomous vehicles. We could train a simulator to drive around city streets, penalizing it when it does something wrong (crashing, running stop signs, etc.) and rewarding it for positive results (arriving at our destination).

Conclusion

If you’re new to AI/ML, hopefully, this article has helped you gain a basic understanding of the terminology and science behind it. If you’re an expert in these areas, maybe this article would be useful in explaining it to potential customers who have no experience with it.
